When we want to manage a remote PC, we usually connect to an SSH server listening on that machine and log in with our username and password.

However, when connecting to your common household PC, this would require a certain amount of set-up beforehand:

- We need the home router’s external IP which usually changes frequently. Therefore, we would need to have a Dynamic DNS (DDNS) server set up.

- We need to have the SSH server port open on the user’s home router and redirected to the user’s PC.

Therefore, and as a simplified and more secure option, we can use an exposed SSH server as a middle man. The user’s PC can connect to it, start a reverse shell and allow us to connect back from our local machine.

An explanation

In this scenario, we have to define a few items so we can understand the process better:

- Remote PC: The machine we want to access and give support to (e.g. bob-pc).

- Remote user: The user on this remote PC that will run the necessary commands to allow us to connect to the PC (e.g. bob).

- Proxy server: A server that has a static IP address or a known hostname and an SSH server exposed to the internet (e.g. vpn.example.org).

- Restricted user: A user on this proxy server, with restricted privileges, as which the remote user will log in (e.g. rhelp).

- Local machine: Our local workstation from which we will connect to the remote PC via the proxy server.

Step by step, the process would be:

- The remote user connects to our proxy server’s SSH, logging in as a restricted user.

- Once connected, the remote user forwards port 44000 on the proxy server to port 22 (SSH) on their own PC (i.e. the remote PC).

- From our local machine, we connect to the proxy server’s SSH and then jump to the proxy server’s port 44000.

- This port will redirect us to the remote PC’s port 22 (SSH), where we login and get a working shell.

It’s worth noting that the proxy server and the local machine can be the same, as long as the requirements are met.

Reverse shell

Configuring the proxy server

In our proxy server, first we will create a passwordless restricted user with very few privileges:

useradd -c 'Restricted User' -m -s '/bin/false' -u 22000 -U rhelp

Passwordless login

If we have the remote user’s SSH public key and we want them to log in without a password, we can just add it to our restricted user’s authorized_keys file:

cat <<- 'EOF' > ~rhelp/.ssh/authorized_keys

ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQCr3GtrUvnWfOyhy5BaaKMUj62lHf3O3caS1FJidSaaG5qtZDwqL6MKGAOgtmt+krJCRp8yT6uKkYYYBHlugOE9Es8LibuxdFT/LHViAWAbtINOKOIzzrC26R7xseNe1VXEEoH8+2QnrH2U1C9D687rIptanGcvkwzj8yOFBVVMIl6Ldhs38r9xpi04Y3SPQl5duTa2CebuICLha1xbS0h9HSOIGQNEeRYtj1Te44fbaWkM/Fg2inA4QLKonWObUDTwYjede9lDaPXSWGUEQz4A2u+ljjGRbxPaarj7HPsnnlpLnQzRZzvjVq1dC3h8swE4Qx8pvImzte4OacUS8vbT bob@bob-pc

EOF

The best part about using a key for login is that we can limit what the remote user can do when connecting via SSH. We just want to allow it to forward ports 44000 (for SSH) and 5900 (for VNC). For this, we have to enter the following in front of the authorized key:

restrict,port-forwarding,permitlisten="localhost:44000",permitlisten="localhost:5900"

So our restricted user’s authorized_keys file would look like this:

restrict,port-forwarding,permitlisten="localhost:44000",permitlisten="localhost:5900" ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQCr3GtrUvnWfOyhy5BaaKMUj62lHf3O3caS1FJidSaaG5qtZDwqL6MKGAOgtmt+krJCRp8yT6uKkYYYBHlugOE9Es8LibuxdFT/LHViAWAbtINOKOIzzrC26R7xseNe1VXEEoH8+2QnrH2U1C9D687rIptanGcvkwzj8yOFBVVMIl6Ldhs38r9xpi04Y3SPQl5duTa2CebuICLha1xbS0h9HSOIGQNEeRYtj1Te44fbaWkM/Fg2inA4QLKonWObUDTwYjede9lDaPXSWGUEQz4A2u+ljjGRbxPaarj7HPsnnlpLnQzRZzvjVq1dC3h8swE4Qx8pvImzte4OacUS8vbT bob@bob-pc
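
To illustrate how the option string and the key combine into a single line, here is a minimal sketch. The helper name restricted_entry and the placeholder key are assumptions made for this example; the options are the ones listed above. As documented for the authorized_keys format, restrict disables all forwarding and terminal features, port-forwarding re-enables forwarding, and each permitlisten limits which address and port a remote forward may listen on.

```shell
#!/usr/bin/env bash
# Sketch: build a restricted authorized_keys entry from a public key line.
# `restricted_entry` is a hypothetical helper name, not part of OpenSSH.

# Options taken from the text: deny everything, then re-enable remote
# port forwarding for the two listed ports only.
KEY_OPTIONS='restrict,port-forwarding,permitlisten="localhost:44000",permitlisten="localhost:5900"'

restricted_entry() {
    local pubkey_line=$1
    # Accept only lines that look like "<type> <base64> [comment]".
    case $pubkey_line in
        ssh-*\ *|ecdsa-*\ *|sk-*\ *)
            printf '%s %s\n' "$KEY_OPTIONS" "$pubkey_line"
            ;;
        *)
            printf 'not a public key line: %s\n' "$pubkey_line" >&2
            return 1
            ;;
    esac
}

# Demo with a placeholder key (not a real key).
restricted_entry 'ssh-ed25519 AAAAC3Nza...placeholder bob@bob-pc'
```

The output could then be written to ~rhelp/.ssh/authorized_keys. If that directory does not exist yet, something like `install -d -m 700 -o rhelp -g rhelp ~rhelp/.ssh` would create it with ownership and permissions that sshd's default StrictModes check accepts.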

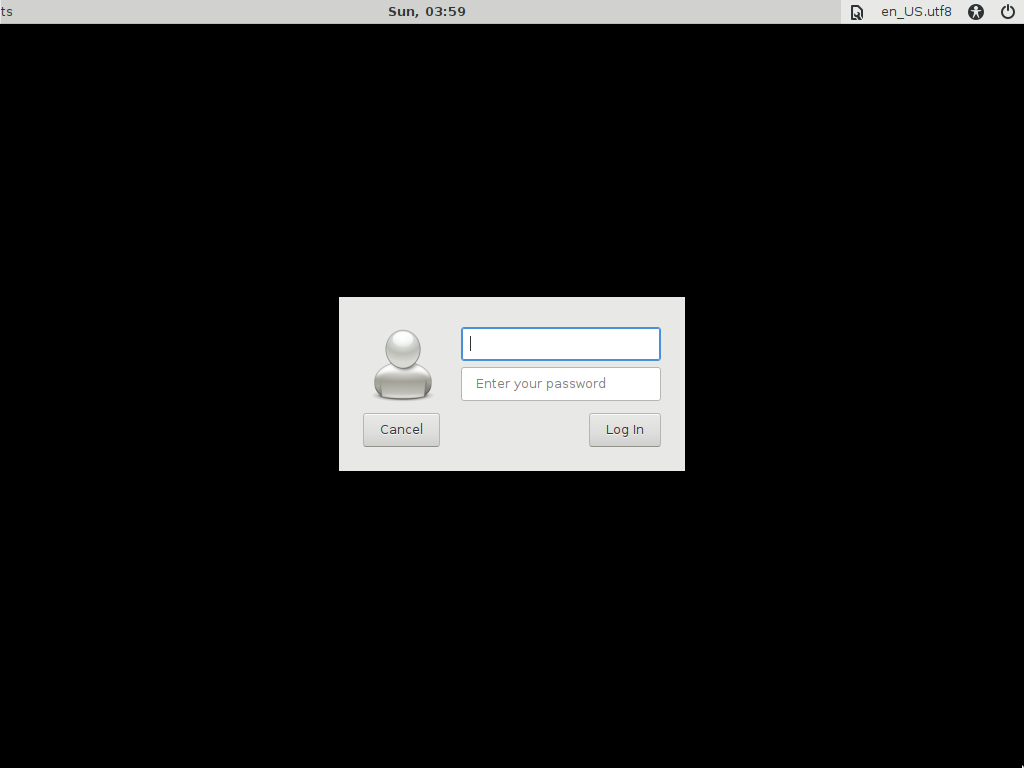

Normal login

If we don’t have the remote user’s SSH public key, we can set a password for our restricted user so the remote user can login manually for the first time:

passwd rhelp

New password:

Retype new password:

passwd: password updated successfully

We might also need to enable password authentication in the SSH server:

cat <<- 'EOF' >> /etc/ssh/sshd_config

PasswordAuthentication yes

EOF

After we restart the SSH service, the remote user should be able to log in with username and password. We can then connect to the remote PC and either create an SSH key pair or take an existing one, and add its public key to our restricted user’s authorized_keys.

Afterwards, we can lock the password of the restricted user, so it can no longer be used to log in, by running:

passwd -l rhelp

passwd: password expiry information changed.

And also disable password authentication in the SSH server’s configuration:

sed -i '/^PasswordAuthentication yes/d' /etc/ssh/sshd_config
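
One caveat with appending to sshd_config: sshd generally uses the first value it finds for a keyword, so an appended PasswordAuthentication yes may be ignored if the file already sets the option earlier, or it may land inside a trailing Match block. The sketch below is one possible way around that, assuming the option is not set inside any Match block or included file. The helper name set_password_auth is hypothetical; it drops existing uncommented occurrences and writes the wanted value at the top. The demo runs on a temporary file rather than the real configuration.

```shell
#!/usr/bin/env bash
# Sketch: set PasswordAuthentication exactly once, at the top of a config file.
# `set_password_auth` is a hypothetical helper, not an OpenSSH tool.

set_password_auth() {
    local value=$1 config=$2 tmp
    tmp=$(mktemp) || return 1
    {
        # The wanted value goes first so that it is the one sshd picks up.
        printf 'PasswordAuthentication %s\n' "$value"
        # Keep every other line; commented-out occurrences are left alone.
        grep -v -i -E '^[[:space:]]*PasswordAuthentication[[:space:]]' "$config"
    } > "$tmp"
    # Overwrite in place so the original owner and mode are preserved.
    cat "$tmp" > "$config"
    rm -f "$tmp"
}

# Demo on a throwaway file.
demo=$(mktemp)
printf '%s\n' 'Port 22' 'PasswordAuthentication no' > "$demo"
set_password_auth yes "$demo"
cat "$demo"
rm -f "$demo"
```

On the proxy server this would be called as root with `set_password_auth yes /etc/ssh/sshd_config` (and later with `no`), followed by `sshd -t` to validate the file before restarting the service.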

Connecting from the remote PC

With the proxy server properly set up, we can ask the remote user to log in from their PC and forward the appropriate ports. They can do so with the following command:

ssh -v -C -N -R 5900:localhost:5900 -R 44000:localhost:22 rhelp@vpn.example.org

The options mean:

- -v: Verbose mode.

- -C: Request compression of all data.

- -N: Do not execute a remote command.

- -R 5900:localhost:5900: Forward port 5900 on the proxy server to port 5900 on the remote PC.

- -R 44000:localhost:22: Forward port 44000 on the proxy server to port 22 on the remote PC.

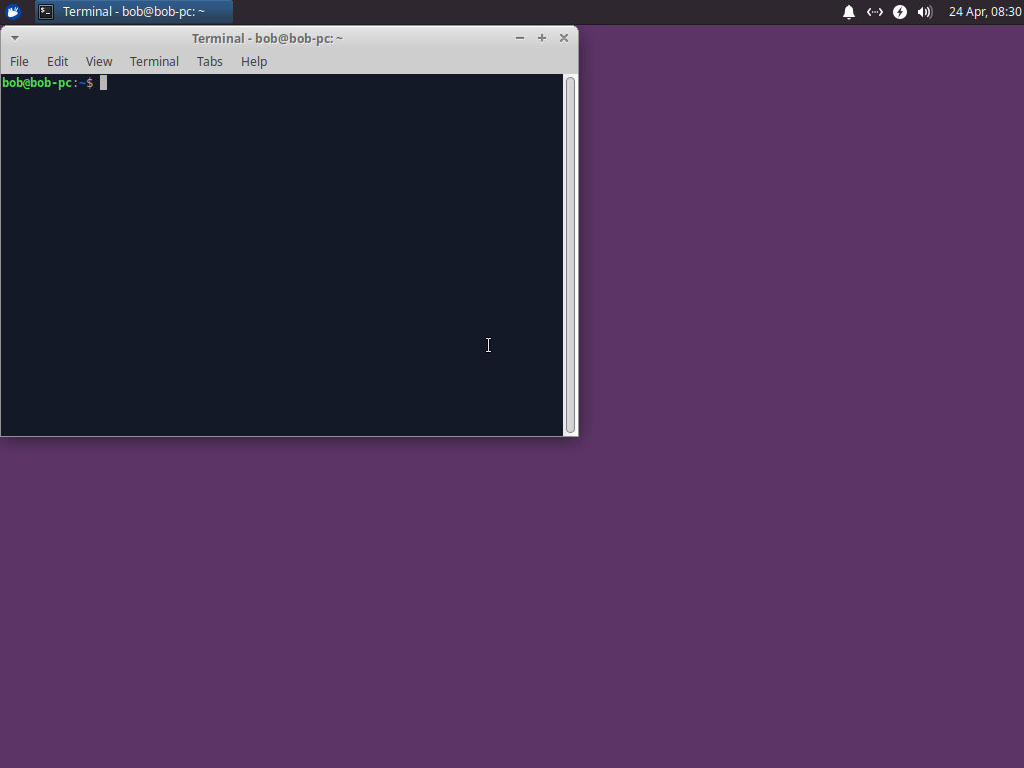

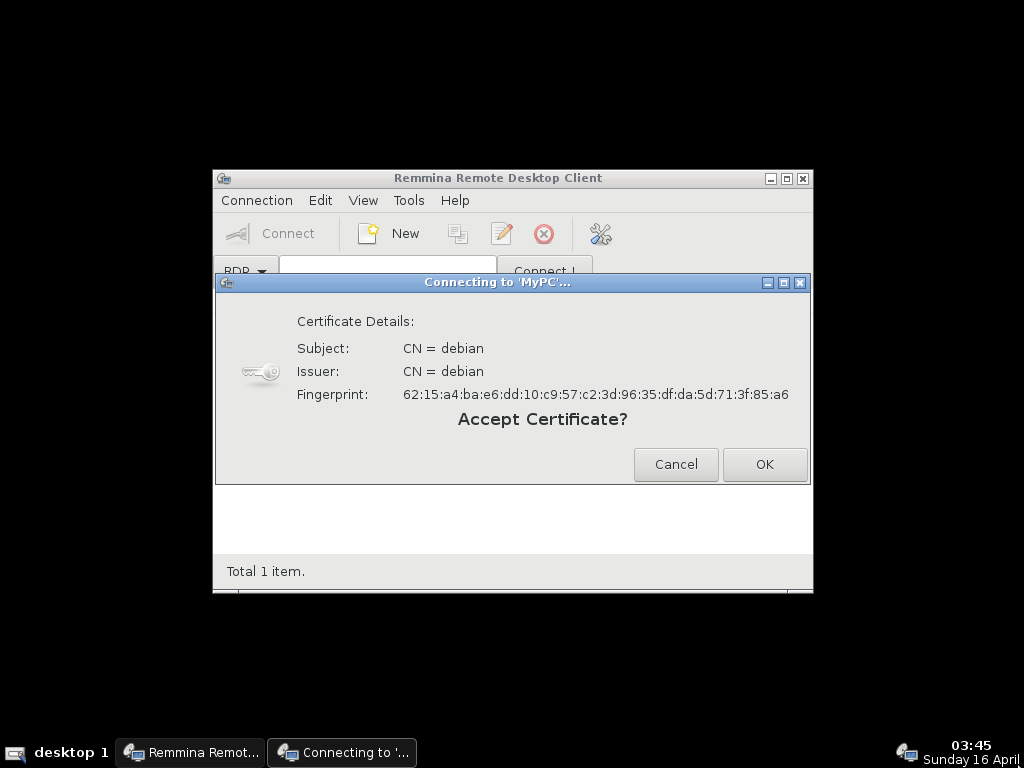

The first time, the remote user will have to enter the command manually on a terminal window, accept the host key and, if not using an authorized key, enter the restricted user’s password manually.

Connecting to the remote PC

From our local machine, we can check whether the remote user has connected to the proxy server by running:

ssh vpn.example.org -- ss -tnlp | grep '127.0.0.1:44000'
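
The grep above only matches an IPv4 listener and prints text rather than giving a clean yes or no. As a small sketch, the hypothetical helper tunnel_is_up below reads `ss -tnl` output on standard input and sets its exit status depending on whether any address is listening on the given port. The sample output in the demo is made up for illustration.

```shell
#!/usr/bin/env bash
# Sketch: decide from `ss -tnl` output whether a forwarded port is listening.
# `tunnel_is_up` is a hypothetical helper name.

tunnel_is_up() {
    local port=$1
    # Column 4 of `ss -tnl` is "Local Address:Port"; match the port at its end.
    awk -v port="$port" '
        $1 == "LISTEN" && $4 ~ (":" port "$") { found = 1 }
        END { exit !found }
    '
}

# Demo with made-up output, so no proxy server is needed to try it.
sample_output='State  Recv-Q Send-Q Local Address:Port Peer Address:Port
LISTEN 0      128    127.0.0.1:44000    0.0.0.0:*
LISTEN 0      128    [::1]:44000        [::]:*'

if printf '%s\n' "$sample_output" | tunnel_is_up 44000; then
    echo 'tunnel is up'
else
    echo 'tunnel is down'
fi
```

Against the real proxy server, this could be used to wait for the remote user: `until ssh vpn.example.org -- ss -tnl | tunnel_is_up 44000; do sleep 5; done`.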

Once the remote user is connected to the proxy server, we can connect to the remote PC from our local machine. We need to use the proxy server as a jump host:

ssh -J vpn.example.org localhost -p 44000

We can add all these and more options in the configuration file for SSH:

cat <<- 'EOF' >> ~/.ssh/config

Host bob-pc

Compression yes

Hostname localhost

LocalForward 45900 localhost:5900

Port 44000

ProxyJump vpn.example.org

ServerAliveInterval 30

EOF

The options are:

- Compression yes: Specifies whether to use compression.

- Hostname localhost: Specifies the hostname we want to connect to.

- LocalForward 45900 localhost:5900: Specifies that port 45900 on the local machine will be forwarded to port 5900 on the remote host.

- Port 44000: Specifies the port number we want to connect to.

- ProxyJump vpn.example.org: Specifies the jump proxy in the form [user@]host[:port].

- ServerAliveInterval 30: Sets a timeout interval in seconds after which ssh will request a response if no data has been received from the server.

With these, when we want to connect to the remote PC again, we can simply run:

ssh bob-pc

If our username and its key are authorized to log in on both the proxy server and the remote PC, we will be automatically logged in to the remote PC, ready to enter commands. Otherwise, we will have to enter the appropriate passwords when prompted.
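
One detail worth considering: because the connection goes to localhost on port 44000, ssh records the remote PC's host key under that address, which would clash if the same forwarded port were ever used for a different machine. The sketch below is one possible variant of the configuration that adds the HostKeyAlias option so the key is stored under the alias instead. The generator function tunnel_host_stanza is a hypothetical helper, and LocalForward is left out to keep the example short.

```shell
#!/usr/bin/env bash
# Sketch: print an ssh_config stanza for a host reached through the proxy.
# `tunnel_host_stanza` is a hypothetical helper name.

tunnel_host_stanza() {
    local alias=$1 port=$2 proxy=$3
    # HostKeyAlias makes ssh store and check the host key under the alias
    # rather than under localhost and the forwarded port.
    cat <<EOF
Host $alias
    Hostname localhost
    Port $port
    ProxyJump $proxy
    HostKeyAlias $alias
    Compression yes
    ServerAliveInterval 30
EOF
}

# Demo with the names used in this post.
tunnel_host_stanza bob-pc 44000 vpn.example.org
```

The printed stanza could be appended to ~/.ssh/config in place of the one shown earlier.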

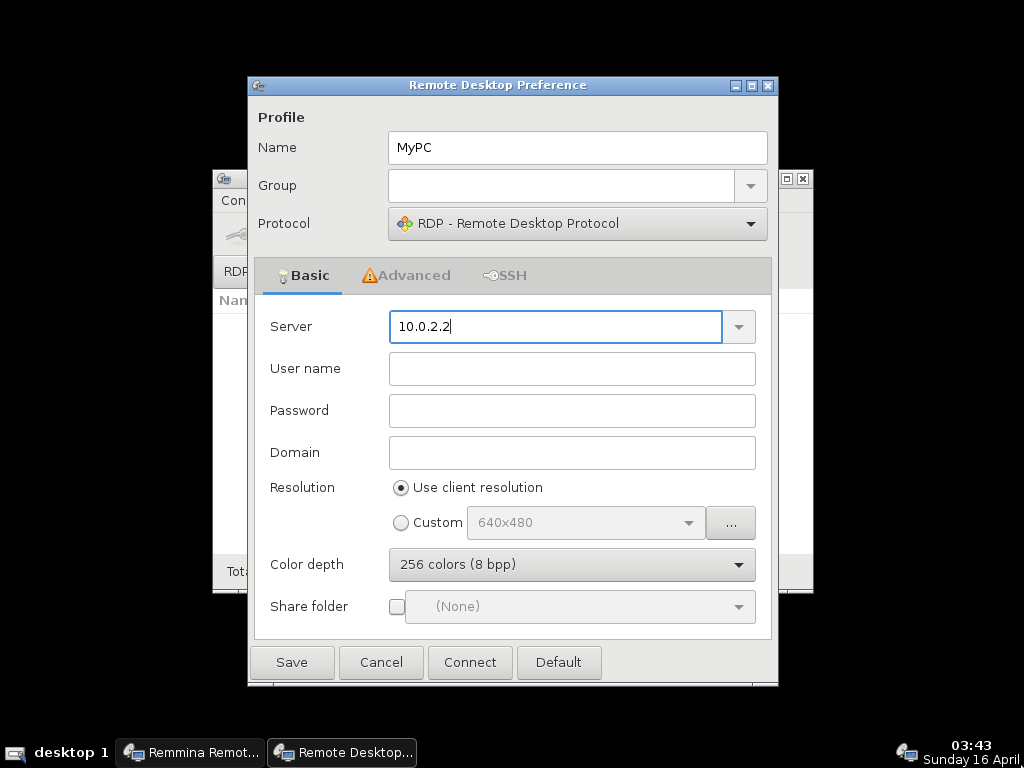

Remote graphical desktop

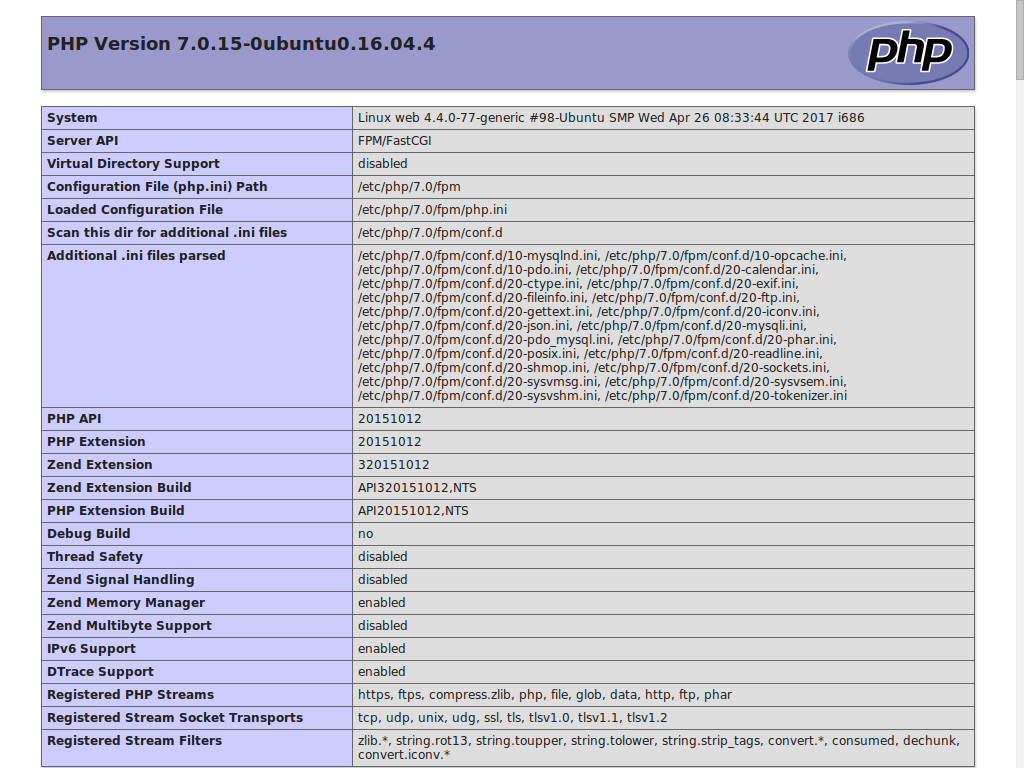

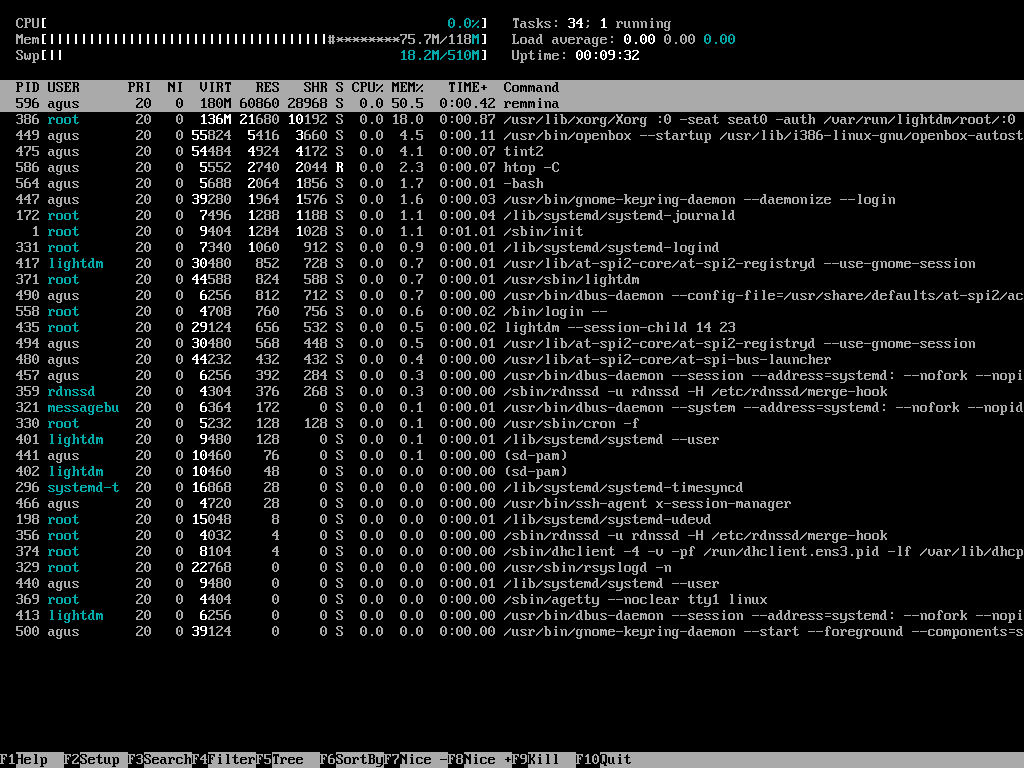

If we want to see the remote user’s desktop and provide support for graphical applications, we will need to use VNC. More specifically, we will have to run x11vnc on the remote PC:

x11vnc -display ':0' -verbose -localhost -forever -auth guess -nopw

The options mean:

- -display ':0': X11 server display to connect to.

- -verbose: Print out more information.

- -localhost: Allow connections from localhost only.

- -forever: Keep listening for connections when a client disconnects.

- -auth guess: Try to guess the XAUTHORITY filename and use it.

- -nopw: Disable the warning message when not using a password.

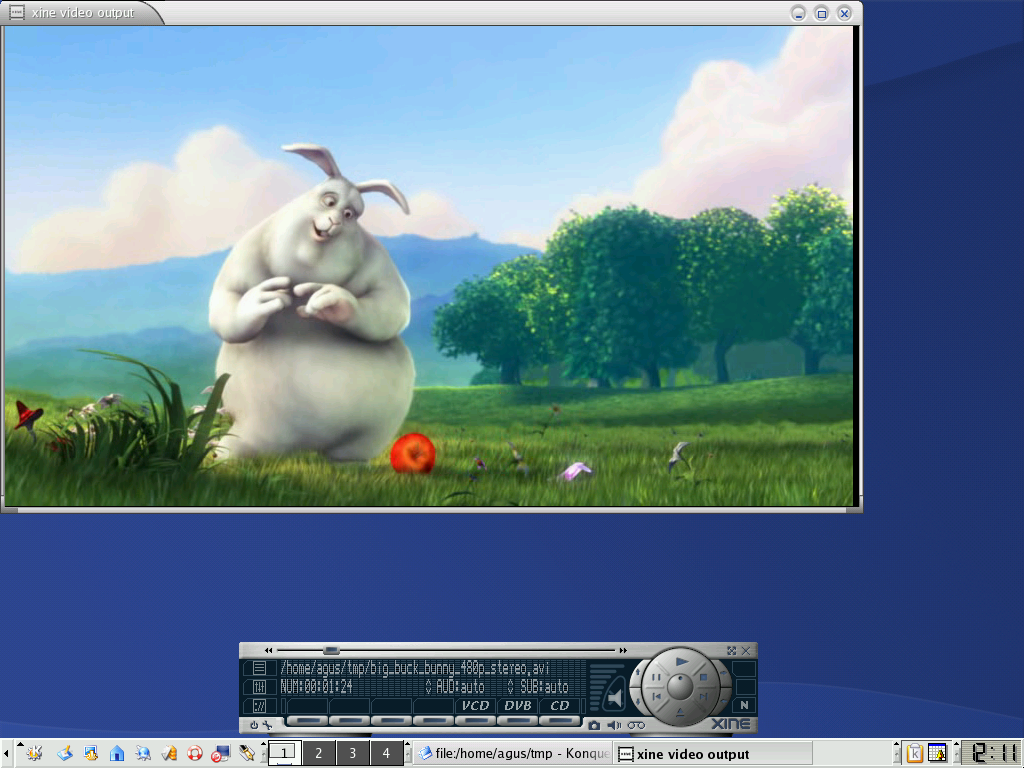

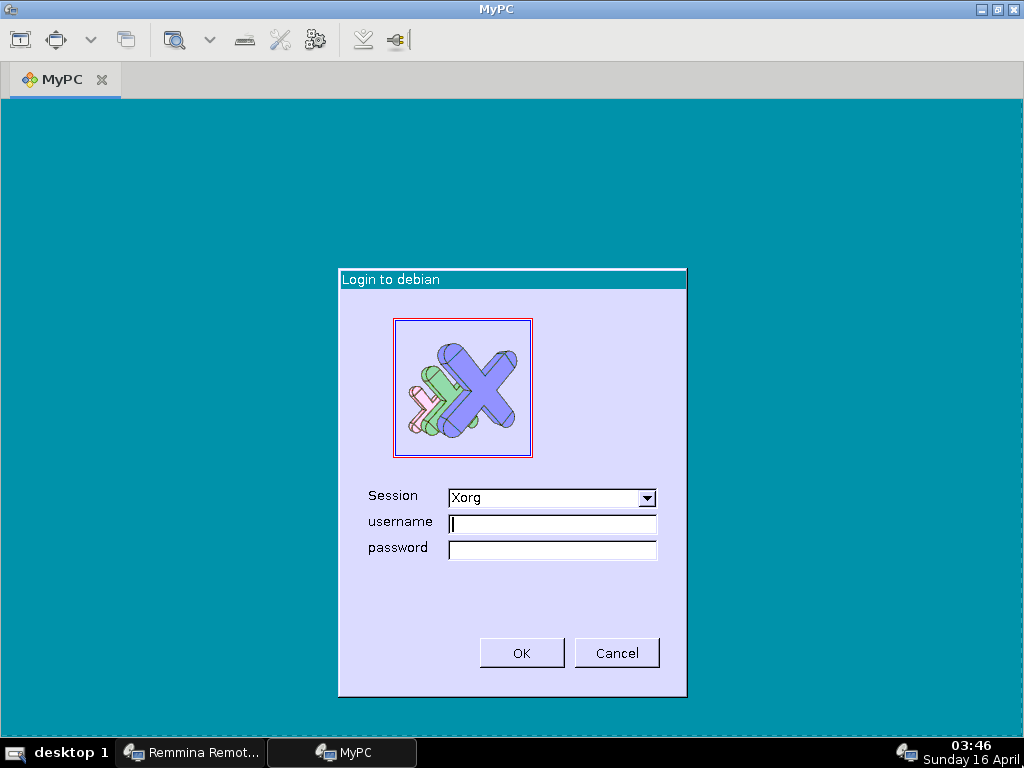

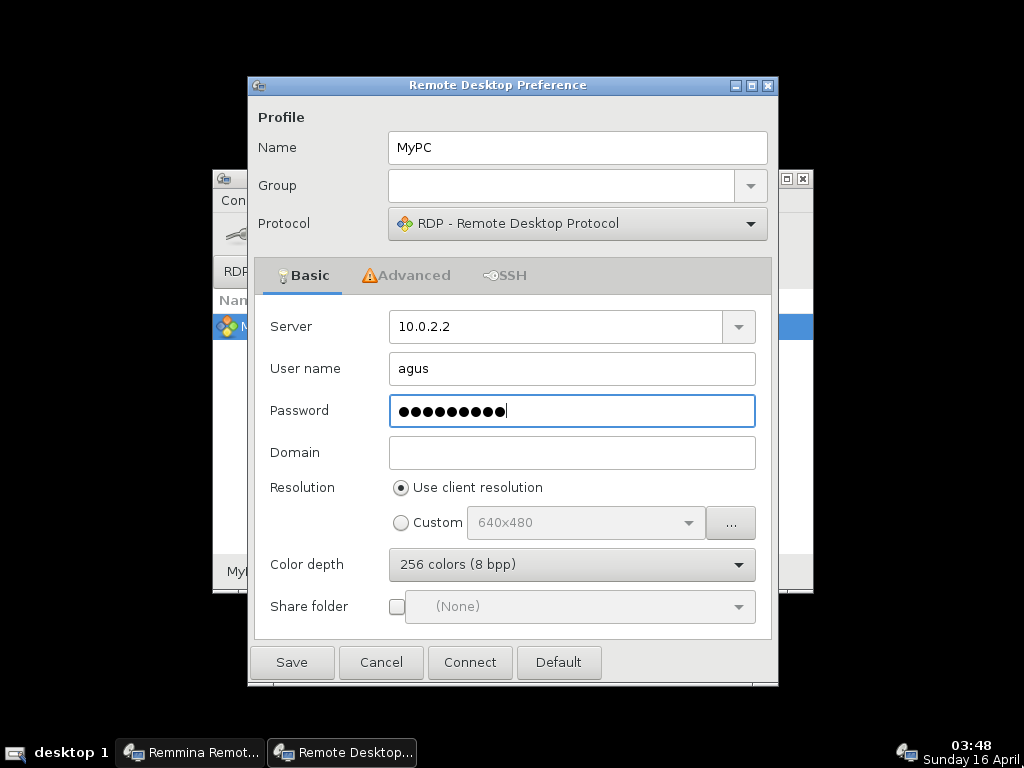

Now we just have to run a VNC viewer on our local machine to connect to the forwarded port and have access to the desktop on the remote PC:

vncviewer localhost:45900

Just like that, we will be able to see and control the remote user’s desktop:

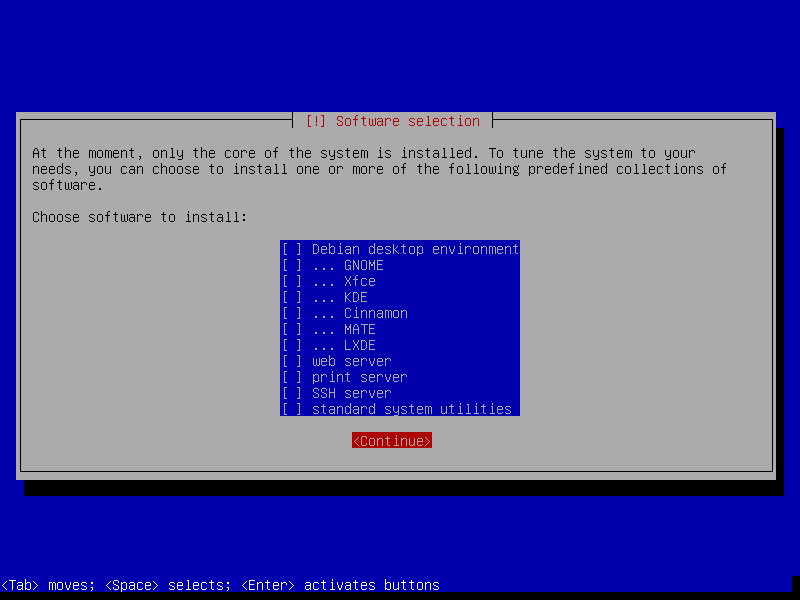

Configuring the remote PC

Making it easier

Once we are connected to the remote user’s PC, we can make it easier for them to activate the reverse shell by creating a file on their desktop:

cat <<- 'EOF' > ~bob/Desktop/SSH.desktop

[Desktop Entry]

Version=1.0

Type=Application

Name=SSH

Comment=Connect to receive remote support via SSH

Exec=/usr/bin/ssh -v -C -N -R 5900:localhost:5900 -R 44000:localhost:22 rhelp@vpn.example.org

Icon=gnome-terminal

Terminal=true

Categories=Application;

EOF

Also, we create a similar file for the remote user to activate the VNC server for remote graphical support:

cat <<- 'EOF' > ~bob/Desktop/VNC.desktop

[Desktop Entry]

Version=1.0

Type=Application

Name=VNC

Comment=Connect to receive remote support via VNC

Exec=/usr/bin/x11vnc -verbose -localhost -forever -auth guess -nopw

Icon=gnome-remote-desktop

Terminal=true

Categories=Application;

EOF

Let’s make these files executable so they can be launched by double-clicking on them:

chmod +x ~bob/Desktop/{SSH,VNC}.desktop

The remote user will see two new icons on the desktop.

With these, the remote user can manually start the reverse shell and the VNC server. Once started, we will be able to connect from our local machine to the remote PC. When the remote user wants to regain control and disconnect us from their PC, they can just close the SSH or VNC windows to do so.

Permanent reverse shell

If we need to have permanent access to the remote PC and the remote user has given us authorization to do so, we can set up a service so the SSH connection is resumed every time the remote PC boots up.

Using systemd

If we have systemd on the remote PC, this can be easily achieved by creating a service file:

cat <<- 'EOF' > ~bob/.config/systemd/user/reverse-ssh.service

[Unit]

Description=Reverse SSH connection

After=network.target

[Service]

Type=simple

ExecStart=/usr/bin/ssh -o 'ExitOnForwardFailure=yes' -o 'ServerAliveInterval=30' -C -N -R 5900:localhost:5900 -R 44000:localhost:22 rhelp@vpn.example.org

Restart=always

RestartSec=5s

[Install]

WantedBy=default.target

EOF

Alternatively, we can use autossh, since it is designed precisely for this purpose:

cat <<- 'EOF' > ~bob/.config/systemd/user/reverse-ssh.service

[Unit]

Description=Reverse SSH connection

After=network.target

[Service]

Type=simple

Environment="AUTOSSH_GATETIME=0"

ExecStart=/usr/bin/autossh -M 0 -o 'ExitOnForwardFailure=yes' -o 'ServerAliveInterval=30' -C -N -R 5900:localhost:5900 -R 44000:localhost:22 rhelp@vpn.example.org

ExecStop=/bin/kill $MAINPID

Restart=always

RestartSec=5s

[Install]

WantedBy=default.target

EOF

We will have to reload the list of services and enable the new service:

systemctl --user daemon-reload; systemctl --user enable reverse-ssh.service

With this, every time the remote user logs into the remote PC, a reverse shell will be started automatically to the proxy server. If we want this service to be started immediately after boot, whether the remote user is logged in or not, we will have to enable the automatic start-up of systemd instances for the remote user (e.g. bob):

loginctl enable-linger bob

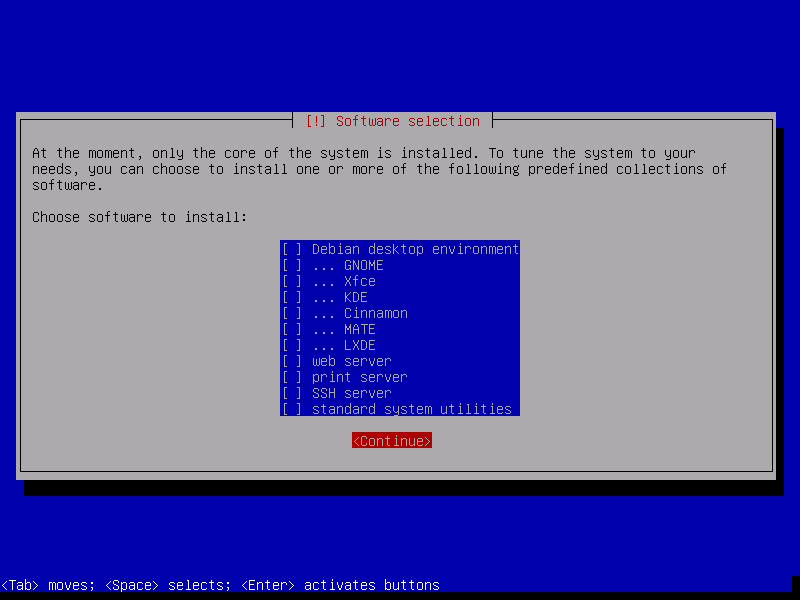

Using cron

If we don’t have systemd installed on the remote PC, we can simply use cron. For this, we run crontab -e and add the following:

@reboot while true; do ssh -o 'ExitOnForwardFailure=yes' -o 'ServerAliveInterval=30' -C -N -R 5900:localhost:5900 -R 44000:localhost:22 rhelp@vpn.example.org; sleep 5; done

If we opt for autossh, we must enter the following instead:

@reboot autossh -M 0 -o 'ExitOnForwardFailure=yes' -o 'ServerAliveInterval=30' -C -N -R 5900:localhost:5900 -R 44000:localhost:22 rhelp@vpn.example.org
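
The inline while loop can become hard to read inside a crontab. As a sketch of the same idea, the loop could live in a small script that cron starts at boot. The function name keep_running and its MAX_RUNS argument are assumptions added here so the loop can be tried safely; passing 0 loops forever, as the crontab entry does.

```shell
#!/usr/bin/env bash
# Sketch: re-run a command whenever it exits, pausing between runs.
# `keep_running` is a hypothetical helper name.

# keep_running DELAY MAX_RUNS COMMAND...
#   DELAY     seconds to sleep between runs
#   MAX_RUNS  stop after this many runs; 0 means loop forever
keep_running() {
    local delay=$1 max_runs=$2 runs=0
    shift 2
    while :; do
        "$@"
        runs=$((runs + 1))
        if [ "$max_runs" -gt 0 ] && [ "$runs" -ge "$max_runs" ]; then
            return 0
        fi
        sleep "$delay"
    done
}

# Demo: three runs of a harmless command with no delay.
keep_running 0 3 echo 'tunnel attempt'

# For the real tunnel, the call would look like this (left as a comment):
# keep_running 5 0 ssh -o 'ExitOnForwardFailure=yes' -o 'ServerAliveInterval=30' \
#     -C -N -R 5900:localhost:5900 -R 44000:localhost:22 rhelp@vpn.example.org
```

With the demo line replaced by the commented call and the script saved to a path of our choosing, the crontab entry shrinks to @reboot followed by that path.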
